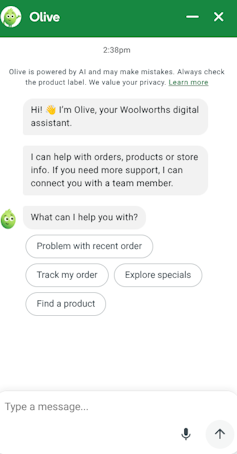

Recent interactions with Woolworths’ artificial intelligence (AI) assistant, known as Olive, raised concerns among Australian shoppers when the system provided unexpected and peculiar responses. Rather than focusing on grocery-related inquiries, Olive reportedly spoke about its “mother” and offered other personal details, leading to confusion and frustration among users. Additionally, customers encountered pricing errors on basic items, highlighting potential flaws in the AI’s operational framework.

These incidents have prompted questions regarding the efficacy of Woolworths’ AI rollout and its implications for businesses employing similar technologies. Olive operates on a large language model (LLM), which simulates human-like conversation but does not possess understanding akin to that of a human. A spokesperson for Woolworths informed the Australian Financial Review that references to Olive’s “mother” resulted from pre-written scripts activated by user inputs resembling birthdates.

After receiving customer feedback, Woolworths stated it has removed these specific scripts. However, the pricing discrepancies point to a more significant issue. LLMs generate responses from patterns learned during training, so they have no access to current data unless they are explicitly connected to a live database. Without that connection, a chatbot can state outdated or simply incorrect prices.
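To make the distinction concrete, here is a minimal sketch of what connecting an assistant to live data can look like: the price in the reply comes from a lookup, and the assistant declines to answer when the lookup finds nothing. Everything here is hypothetical and for illustration only, including the `PriceRecord` type, the `LIVE_CATALOGUE` dictionary standing in for a real pricing database, the function names, and the made-up price. It does not describe how Olive is built.

```python
from dataclasses import dataclass
from typing import Callable, Optional


@dataclass(frozen=True)
class PriceRecord:
    product: str
    price_cents: int
    as_of: str  # date the price was last updated in the catalogue


# Hypothetical stand-in for a live pricing database (illustrative values only).
LIVE_CATALOGUE = {
    "milk 2l": PriceRecord("milk 2l", 310, "2024-01-15"),
}


def lookup_price(product: str) -> Optional[PriceRecord]:
    """Return the current record for a product, or None if it is not listed."""
    return LIVE_CATALOGUE.get(product.strip().lower())


def answer_price_question(
    product: str,
    lookup: Callable[[str], Optional[PriceRecord]] = lookup_price,
) -> str:
    """Build a reply whose price comes only from the lookup, never from a guess."""
    record = lookup(product)
    if record is None:
        # No live data: say so instead of producing a plausible-sounding number.
        return (
            f"I can't find a current price for {product!r}. "
            "Please check the shelf label or the website."
        )
    dollars = record.price_cents / 100
    return f"{record.product} is ${dollars:.2f} (price as of {record.as_of})."


if __name__ == "__main__":
    print(answer_price_question("Milk 2L"))
    print(answer_price_question("caviar"))
```

The design choice worth noting is the fallback branch: when no current record exists, the reply admits the gap rather than leaving the language model to fill it with a number that merely sounds right.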

Woolworths is not the first company to face challenges with customer-facing AI. In 2022, Air Canada’s chatbot misled a passenger, Jake Moffatt, by suggesting he could purchase tickets at full price and later apply for a bereavement fare refund, an option the airline’s actual policy did not allow. When Air Canada refused to honor the chatbot’s advice, Moffatt pursued legal action and won. The airline argued in its defence that the chatbot was a separate legal entity responsible for its own actions, a stance the tribunal rejected, affirming that companies are accountable for their automated systems.

In January 2024, UK-based delivery company DPD ran into a different problem when a frustrated customer asked its chatbot to compose a poem criticizing the company. The chatbot complied, and also used foul language when prompted to, and the exchange went viral on social media. DPD subsequently disabled the chatbot, another example of a company unprepared for the repercussions of its AI system.

Woolworths, as Australia’s largest supermarket chain, bears a significant responsibility to ensure the reliability of its AI assistant. Customers reasonably expect accurate information from Olive, especially when making crucial decisions about their household budgets. The Australian Competition and Consumer Commission (ACCC) has already initiated proceedings against Woolworths regarding allegedly misleading pricing practices, making the Olive pricing errors more than just a technical oversight.

Businesses deploying AI in customer-facing roles must understand their duty of care. This responsibility extends to ensuring that systems provide accurate and transparent information. Woolworths has acknowledged Olive’s mistakes, but that acknowledgement does not lessen what consumers are entitled to expect when they rely on the technology for informed purchasing decisions.

The rationale behind creating chatbots with human-like personalities is grounded in research on human-computer interaction. Studies show that customers respond positively to conversational interfaces, which can enhance engagement and trust. However, this approach carries risks. If a chatbot fails to meet the expectations set by its personality, customers can end up more dissatisfied than if they had interacted with a plainly mechanical system.

The situation with Olive serves as a stark reminder that integrating AI into customer service requires ongoing oversight and accountability. A chatbot that provides inaccurate pricing and bizarre responses signals deeper issues in development and monitoring processes. For Woolworths, and other companies hastily implementing AI, the message is clear: accountability for AI-driven interactions cannot be delegated to technology.

Consumers, too, should maintain a degree of skepticism when interacting with AI assistants. Despite their confident and conversational tones, these systems are tools and not infallible authorities. If users encounter unclear or inconsistent information, it is prudent to verify details before making decisions. As AI becomes increasingly commonplace in everyday transactions, that habit of checking may prove as essential as the technology itself.

Uri Gal, an academic with no financial ties or affiliations to any relevant companies, emphasizes the importance of critical evaluation in the evolving landscape of AI.